What if you had a friend who was fluent in 26 languages and conversational in another 50; who could pass the bar, program competently in dozens of programming languages, and hold a lucid, informed conversation on any topic; who had memorized nearly every fact about the world, from cytokinesis to the 20 most common Finnish surnames; and who could write cogent essays as fast as they could type, which, depending on the day, was around 100 to 500 words per minute?

What would you say about this friend and their intelligence?

What if I told you this friend could actually type millions of words per minute, across thousands of different tasks simultaneously? And that they could work 24/7 for months without interruption?

What if this friend were young and just starting out, and you knew they had much more potential still hidden?

Too good to be true?

Well, that’s what we got on March 14th, 2023, when OpenAI unveiled GPT-4, the first truly human-like general intelligence. And what happened next?

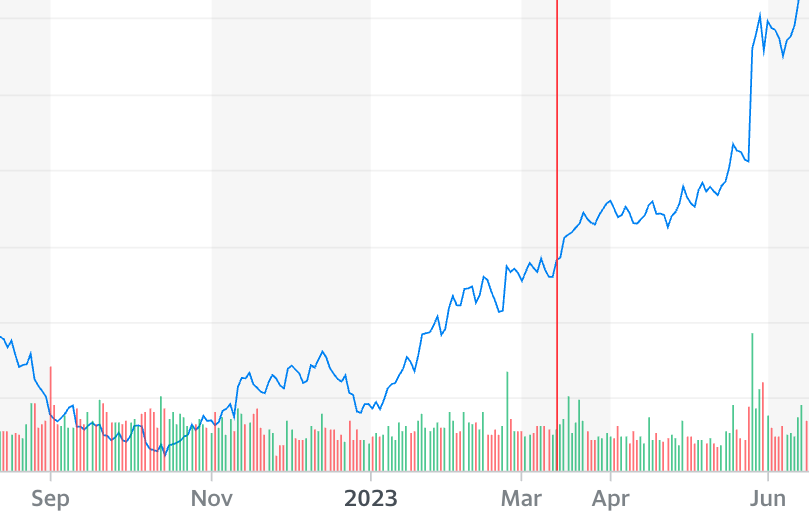

Nothing, mostly. There were a few small articles in the mainstream media, lost below the fold. Nvidia, maker of the GPUs that power our “friend”, saw its stock rise 10% over the next few days and 20% by the end of the month, but it remained well below its previous highs.

Very few, it seemed, fully grasped the significance of the moment. Civilization was about to be upended; human cognition was, for the first time, about to be outcompeted and commoditized. The implications were enormous. As the cost of knowledge work halved, and then tenthed, and then hundredthed, what would happen to the world economy? How much faster would technology advance? What would the billions of knowledge workers do? If new software became 100x cheaper to build, what would happen to SaaS companies?

Nothing, according to the markets. On March 15th, the Cloud SaaS index did nothing. It wasn’t until three months later, after NVDA’s earnings report, when the GPU forecasts entered public awareness, that there was finally a reaction, and even then only a slight one.

Why was the reaction so muted?

Hypothesis #1 - Denial

People are in perennial denial about how good computers are at things. Every time computers surpass humans at some cognitive task, that task is dropped from the “real” definition of intelligence and other tasks are given more weight. Being good at math, or chess, or identifying people, or translating languages: it’s never enough. People cling to a naive, anti-scientific belief that there is something special or irreproducible about human cognition. It’s right there in the name: “artificial” intelligence. This belief wasn’t entirely crazy before LLMs; previous systems clearly lacked the fluidity of general intelligence, which made them seem only narrowly useful. With GPT-4, this notion became nonsensical: GPT-4 is good at nearly every task that was previously used to gauge a human’s intelligence. Yes, it’s still missing pieces. It is something like a disembodied fragment of a complete intelligence, an oracle rather than a task-competent agent, at least for now. But its underlying knowledge and reasoning skills are human-level or superhuman along so many dimensions.

Hypothesis #2 - Meh

The applications and implications of GPT-4 are yet to be felt. And things must be felt before they are real.

As mind-blowing as GPT-4 is, its primary miracle, instantly generating almost any piece of text you could want, is one that Google and the internet had already mastered. It took a painstaking 20 years and a much cruder approach, but the internet performs the same miracle reliably enough. If you wanted to know what cytokinesis was or the 20 most common Finnish surnames, to translate between languages, or to get an answer to a bar exam question, Google and the vast ocean of internet content were standing by.

The deep impact of commoditized general intelligence will take years to really be felt and decades to fully mature, and it hasn’t even arrived yet; we are seeing only its first signs.

Hypothesis #3 - Too familiar

GPT-4 seems unremarkable precisely because it is so human-like. Talking to GPT-4 is mostly like talking to your smart friend. Except it can’t even make a sandwich! Even my dim friend can make a sandwich.

And so here we are

In reality, none of these are good excuses: the market should have moved more after March 14th, 2023. Most of NVDA’s move over the following 8 months should have been priced in much sooner. Unless you thought OpenAI was lying about its capabilities (which was unlikely), there was at least one obvious and immediate implication: AGI is much closer than we thought, the race is on, and EVERYONE (governments, big tech, small tech) will want more GPUs.

This lack of reaction is part of what prompted me to start this newsletter. We need more thinking about AI and its economic impacts. If we’re asleep at the wheel, we risk catastrophe. We need to deepen our understanding of the economic nature of machine intelligence, its possible impacts on society, and its risks. And we need more “definite optimistic” scenarios of what safe AI acceleration looks like, and of how we can ensure the flourishing of all humanity in a world of commoditized superintelligence.

Stay tuned.